In November 2017, former GGE Chair Ambassador Amandeep Singh Gill circulated a paper posing an informed and important question: could potential LAWS be accommodated under current military command and control? The U.S. delegation responded with an unequivocal yes, noting:

> [c]ommanders must authorize the use of lethal force against an authorized targeted military objective. That authorization is made within the bounds established by the rules of engagement (ROE) and international humanitarian law (IHL) based on the commander’s understanding of the tactical situation informed by his or her training and experience, the weapon system’s performance informed by extensive weapons testing, as well as operational experience and employment of tactics, techniques, and procedures for that weapon. In all cases, the commander is accountable and has the responsibility for authorizing weapon release in accordance with IHL. Humans do and must play a role in authorizing the use of lethal force.

In contrast to the response of the U.S. delegation, some States and members of civil society have expressed concern that the employment of LAWS would lead to unintended consequences such as rogue machines, indiscriminate targeting and machine learning that runs contrary to the programmed mission. While those concerns should be addressed, all but a few technologically capable States appreciate and anticipate the risks related to LAWS during the research, development and fielding stages of the weapon. All weapon systems used in armed conflict must undergo a robust weapons review process and be employed in a manner that complies with IHL. LAWS would be no different. In the United States and other States, potential LAWS, like all other weapon systems, undergo comprehensive and proactive review processes to ensure that the system operates as intended, to prevent compromise and to ensure compliance with IHL.

This research and development process includes building risk mitigation into weapon systems, a common State practice in the international community. There is every reason to believe that LAWS development will undergo the same, if not greater, scrutiny. For example, the U.S. government is currently researching and developing autonomous weapon systems with manual or automatic safeguards or locks in case of weapon malfunction, the possibility of human intervention during operations, and real-time system status updates provided to commanders. These risk mitigation measures would allow the machine or the commander to intervene and disable the weapon or end the mission if a malfunction becomes a possibility.

While these machine-based safeguards will be used to ensure that autonomous weapons do not operate outside the parameters of a particular mission, the ultimate responsibility for employing LAWS in a manner consistent with IHL will rest with the commander, not with the machine or with the research and design teams developing the safeguards. Unlike machines, commanders and operators have an obligation to comply with IHL. They are held accountable for their decisions to use force regardless of the nature or type of weapon system used.

Unfortunately for the progress of the GGE, some States and non-governmental organizations (NGOs) center their objection to LAWS development on the mistaken belief that autonomy will become both the surrogate and the scapegoat for the commander. To be clear, as with all weapon systems, States must hold commanders and operators accountable for weapons release and the consequences of any weapons engagement. The weapon or machine is not, nor will it ever be, the accountability proxy for willful or reckless noncompliance with IHL.

Most CCW States appreciate the inherent challenges of employing potential LAWS, and it should be noted that this focus and fear are concentrated in a small number of States. However, some of these States demonstrate an unclear understanding of the basic operating principles of emerging technologies and of the integration of autonomous functions into weapon systems. They also lack the technological capabilities to develop or employ these systems. Even more concerning is a select group of NGOs that seem to be fueling States’ fears with an equally misguided focus and incomplete understanding, or worse, a selective ignorance, of the technology.

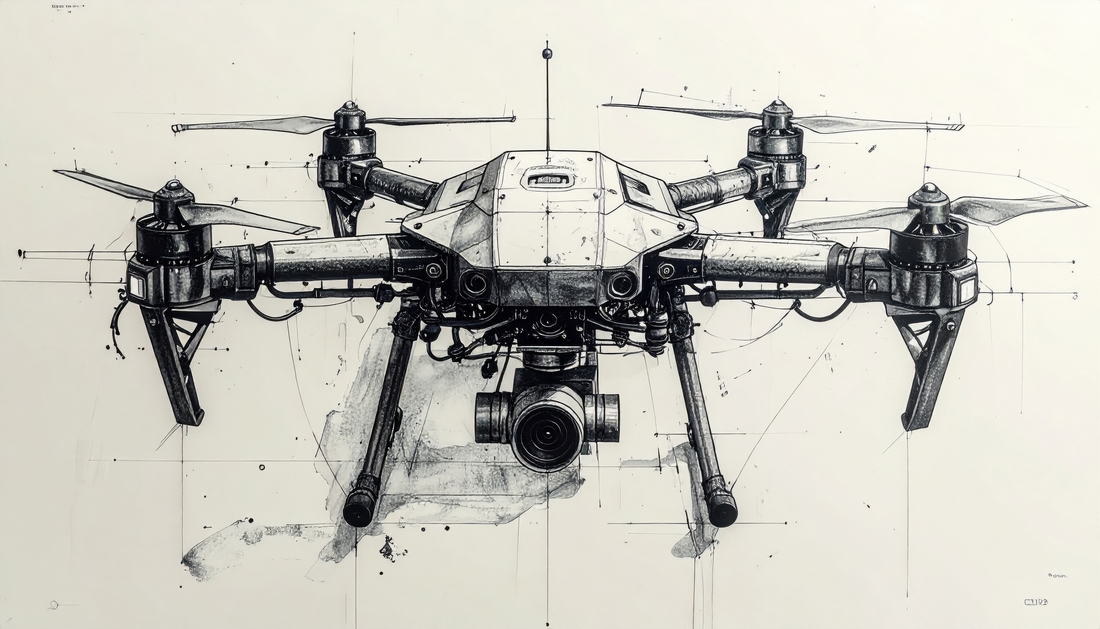

Misguided focus on the machine has resulted in a sometimes fantastical view of autonomy in weapon systems. The vital legal and ethical questions are not machine-focused; rather, they center on how the human employs the machine and what consequences the human intends that employment to have. The key issue for ‘human-machine interaction in emerging technologies in the area of LAWS’ is ensuring that machines help effectuate the intent of commanders and the operators of weapon systems. Practically speaking, can the human operator rely on the prospective weapon system to select and engage targets while complying with IHL? The answer to that question is a categorical yes. This can be achieved by continuing to employ a weapon system development process whose outcomes strictly comply with IHL as well as domestic law and policy, and by trusting our commanders to employ these weapon systems with the same scrutiny. And how do commanders, researchers, developers and roboticists achieve that purpose? Below is a brief discussion of autonomy in current systems and of how those systems will better ensure appropriate levels of human judgment and help minimize unintended engagements and collateral damage.

Consider a scenario in which indirect fires are being used to engage an enemy tank in open terrain. In this type of targeting scenario, we have seen a technological progression from unguided or ‘dumb’ munitions to laser guidance, GPS guidance and inertial guidance systems. Recent advances include sensor-fused munitions that add camera systems, infrared homing, radar tracking and pattern detection. For example, the Swedish BONUS round uses a 155mm artillery shell to deliver two sub-munitions that can scan multiple frequencies in the infrared spectrum and compare detected vehicles against a programmable target database. Once it detects a vehicle that matches an entry in the target database, the BONUS round will engage that target; if the vehicle is not in the database, it will not be engaged. Unlike traditional munitions, the BONUS round is able to adjust to a moving target such as a tank. If no targets from the database are identified, the BONUS round will self-destruct to avoid causing collateral damage or leaving unexploded ordnance on the battlefield. Other advanced sensor-fused munitions could render themselves inert if the system detected, for example, a medical vehicle instead of a tank. The BONUS round thus extends human control: it allows the commander to adjust the terminal guidance system to strike only the intended target.

In addition to munitions such as the BONUS round that employ autonomy, targeting systems on ground vehicles such as a tank or light armored vehicle could benefit from increased target classification capabilities enabled by automated features. Such systems use machine learning algorithms to seek, acquire and properly identify valid targets with greater speed and accuracy than conventional systems. Bounding boxes (three-dimensional boxes that fully enclose an object detected by the system) could alert crew members to potential threats and prioritize those threats while eliminating non-military vehicles as potential targets. Additionally, intelligent fire control systems combine multi-spectral sensors from various platforms to better synchronize effects and reduce the chance of collateral damage. Situations in which humans must make split-second decisions, or in which humans no longer have the ability to influence the effects of a weapon, are exactly those in which autonomy or artificial intelligence (AI) could significantly improve the accuracy and efficiency of decision making. Autonomy and AI are already achieving more desirable outcomes in terms of distinction, proportionality and the protection of non-combatants and civilians. Continued research into and employment of these technologies will enhance IHL compliance and reduce collateral damage.

The systems outlined above are developed in strict compliance with IHL and undergo an extensive weapons review process. Essential to the design of the BONUS round and the other systems mentioned above is the idea that commanders and operators, and the international community, can rely on the autonomous characteristics of these systems to engage targets within the parameters of the IHL principles of distinction and proportionality. In IHL-compliant countries, if weapons developers cannot field autonomy-enabled weapon systems that are equally reliable, those systems will never see the battlefield, and, to be sure, commanders will not employ them in combat.

GGE HCPs and civil society must come to understand that munitions such as the BONUS round, properly employed by a commander, and similar technologies represent the present and future of LAWS, not killer robots detached from command and control. The skeptical participants in the LAWS GGE need to move past their fantastical view of autonomous weapons and the associated misguided approach to accountability. Once this occurs, the Group can make real progress on the issue of autonomy in weapon systems and, in turn, foster greater compliance with IHL and improved humanitarian outcomes.

DISCLAIMER: Posts and discussion on the Humanitarian Law & Policy blog may not be interpreted as positioning the ICRC in any way, nor does the blog’s content amount to formal policy or doctrine, unless specifically indicated.
