Earlier this month, a viral story alleged that a US Air Force simulation took place during which an “AI drone” “attacked” its human operator when they interfered with the targeting objectives set for the drone. The story was later retracted when the US Air Force source clarified that the scenario described had been a “thought experiment”. But “drones” that integrate automated, autonomous and AI technologies in targeting and mobility functions already exist – for example, in the form of loitering munitions that have been used in many recent conflicts, such as those in Libya, Nagorno-Karabakh, Syria and Ukraine.

In this post, Dr. Ingvild Bode, Associate Professor at the Center for War Studies (University of Southern Denmark) and Dr. Tom Watts, Leverhulme Trust Early Career Research Fellow (Royal Holloway, University of London) argue that such loitering munitions set problematic precedents for human control over the use of force and underline the urgent need for legally binding rules on autonomous weapon systems (AWS).

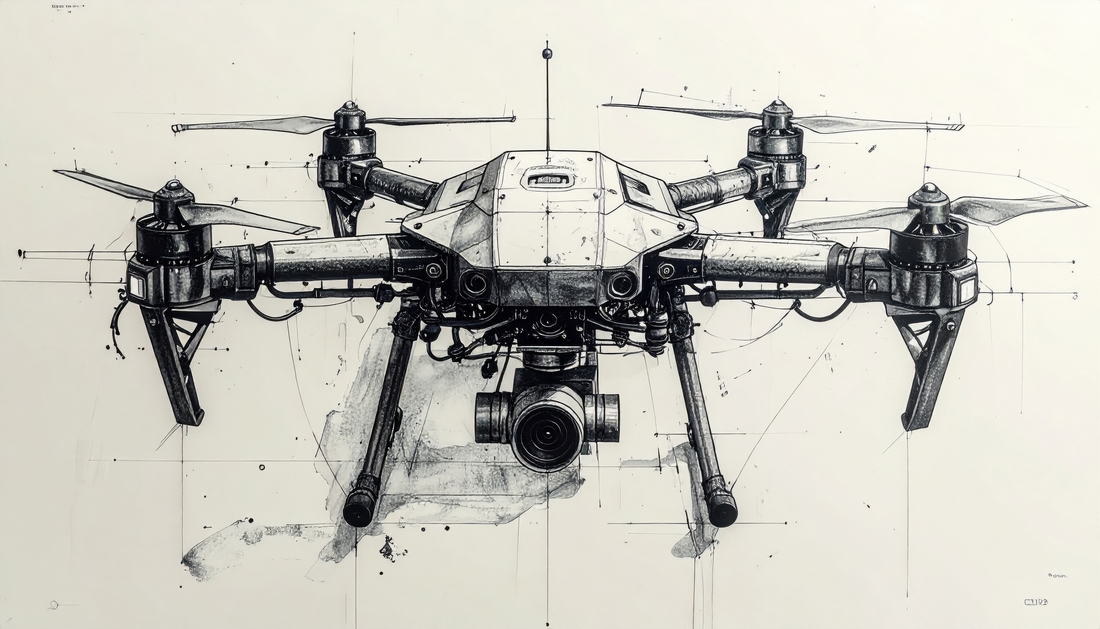

Loitering munitions are a distinct category of expendable uncrewed aircraft that can integrate sensor-based analysis to hover over, detect, and explode into targets. While some of these systems may lack this technical capability, in part to keep production costs down, others can integrate automated, autonomous, and AI technologies into targeting and mobility functions. The Israel Aerospace Industries Harpy, for example, is widely considered an autonomous weapon system (AWS) capable of automatically applying force via sensor-based targeting without human intervention.

Loitering munitions have proliferated rapidly in recent years. By mid-2022, nearly 24 states produced these weapons, more than double the fewer than 10 that did so in 2017. The global market for these weapons is forecast to grow significantly in the coming years – a dynamic driven, in part, by the widespread fielding of these weapons in Ukraine. Worryingly, as discussed in the conclusion of this post, the global development and diffusion of these weapons is far outpacing the stalling global regulatory debates on AWS centred around the Group of Governmental Experts (GGE) of the Convention on Certain Conventional Weapons (CCW).

Most manufacturers of current loitering munitions argue that such systems work with a “human-in-the-loop”. This means, typically, that human operators monitor the platform’s operation via a two-way datalink and must authorise the release of force. Therefore, loitering munitions appear not to be currently used as AWS. As our recently published report demonstrates, however, practices of designing and using such systems show us the direction of travel when it comes to integrating autonomous and AI technologies into existing categories of weapon systems.

Problematic consequences of loitering munitions that use autonomous technologies in targeting

Our research suggests that there are at least three potentially problematic consequences associated with loitering munitions deserving of scrutiny.

First, integrating automated, autonomous, and AI technologies into the targeting functions of loitering munitions appears to have created significant uncertainty regarding when and where force is used. This uncertainty also extends to how precisely human operators may exert control over specific targeting decisions. While most manufacturers characterise the release of force as remaining under the control of a human operator, some manufacturers also allude to the potential technological capability of the systems to launch attacks on targets without human intervention.

A closer look at the Kargu-2, a type of loitering munition that has drawn significant attention, illustrates this point. In March 2021, a report authored by a UN Panel of Experts on Libya appeared to characterise the Kargu-2 as a lethal AWS. The report argued that, in March 2020, forces affiliated with the Libyan Government had used the Kargu-2 to attack militias autonomously, that is, without human supervision or intervention. The system’s manufacturer, STM, disagreed, noting that the platform’s missions are “fully performed by the operator, in line with the Man-in-the-Loop principle”. However, prior to the publication of the UN report, STM had advertised the Kargu-2 in very different terms. At that time, the system was described as possessing “both autonomous and manual modes,” and as having been designed to utilise “real-time image processing capabilities and deep learning algorithms”.

The fact that some loitering munitions appear to have been designed with a latent capability to engage in sensor-based targeting without human assessment is noteworthy. Under stressful and rapidly changing combat conditions, it is possible that humans may uncritically trust the system’s outputs, as research on automation bias (or over-trust) suggests. In certain situations, human operators may also lack sufficient situational awareness to doubt the platforms’ suggested targets. Further, in the absence of specific legally binding rules on autonomy in weapon systems, having access to even a latent capability could mean that conflict parties may, eventually, come to use it to gain a perceived battlefield advantage. For example, according to Wahid Nawabi, CEO of AeroVironment, which manufactures the Switchblade 300 and Switchblade 600 loitering munitions fielded by the US military and some of its allies: “The technology to achieve a fully autonomous mission with Switchblade pretty much exists today”.

Moreover, the uncertainty concerning how human control is exercised over these systems extends to what is knowable about loitering munitions based on open-source data. Much of the available information on the technological capabilities of such systems is drawn from a limited array of sources, often associated with the manufacturers of these weapons. As the example of the Kargu-2 demonstrates, such data tends to serve particular (commercial) agendas. This poses significant challenges to building critical (public) awareness of the direction that the development of autonomy in weapon systems is taking.

Use of loitering munitions as anti-personnel weapons

Second, loitering munitions have been designed and fielded against a wide variety of objects and as anti-personnel weapons, including in populated areas. This speaks to significant changes in the global development of such platforms. The earliest loitering munitions, such as the IAI Harpy, were principally designed to search out and destroy radar systems. Systems in use now, such as the Russian-manufactured Lancet-3, can attack various types of objects, some of which may not be military objectives by nature.

There are also loitering munitions designed specifically to target personnel and intended for use in populated areas. Of the 24 loitering munitions examined in the report and its accompanying data catalogue, 14 have anti-personnel target profiles and 18 are advertised for use in populated areas. This puts civilians and civilian objects at risk of being unlawfully targeted. In fact, the very design of loitering munitions with anti-personnel target profiles contrasts with ethical principles of humanity and compliance with international humanitarian law (IHL), such as distinguishing between civilians and combatants.

Third, as highly mobile systems, loitering munitions have been used over wider areas for longer periods. At the point of launch and while the platforms are in the air, the precise target is unclear. Current anti-personnel loitering munitions have an operational endurance of between 15 minutes and six hours and a range of between five and 50 km. The geographical area within which an attack might happen therefore becomes large. This increases the spatial and temporal distance between humans exercising deliberative judgement and the use of force. These dynamics may also result in indiscriminate and wide-area effects, especially if we consider that some loitering munitions, including the Kargu-2, appear to be capable of being equipped with thermobaric warheads.

In sum, the development and use trajectory of loitering munitions illustrates how integrating autonomous and AI technologies into targeting generates new and significant uncertainties regarding where, when, against whom, and under what conditions force is used.

Loitering munitions and regulating AWS

The problematic tendencies associated with loitering munitions underline the urgent need for states to develop and adopt legally binding international rules on autonomy in weapon systems. Yet, the international debate on AWS remains slow-moving. Last month, the most recent meeting of the GGE ended with a final report that was, once again, disappointing to many of the stakeholders involved. This obscures the fact that debates at the CCW this year were substantive and showed increasing state convergence around the two-tiered approach. The two-tiered approach seeks to (1) prohibit lethal autonomous weapon systems (LAWS) that can be operated without human control, while (2) regulating the design and use of autonomy in weapon systems, including through safeguarding human control and ensuring that the use of force remains predictable and understandable. But because the CCW operates by consensus, states that prefer voluntary measures, as well as those that seek to obstruct the process, hamper progress.

We can expect the governance process of AWS to be a lengthy one, especially when it comes to negotiating a much-needed international legally binding instrument. Regulatory momentum has been building and in-depth examinations of existing weapons can help to sustain this. As the global trajectory of loitering munitions demonstrates, we do not need to go to dystopian sci-fi narratives to imagine potential problems associated with AWS. There are already problems at hand in how states design and use weapon systems integrating autonomous technologies in targeting in particular ways. The international regulatory debate on AWS should pay more attention to these practices. Often, if practices related to existing weapon systems are talked about, they are taken to represent “best practices”. But as the case of loitering munitions demonstrates, current practices can also show what is problematic about autonomous technologies in warfare and what should, therefore, be expressly prohibited, regulated, governed, or steered. International stakeholders need to urgently go beyond the “wait and see approach” if we want to avoid highly problematic consequences.

Authors’ note: The arguments in this post are based on the authors’ recently published policy report, “Loitering Munitions and Unpredictability: Autonomy in Weapon Systems and Challenges to Human Control”, which can be accessed here.

Research for the report was supported by funding from the European Union’s Horizon 2020 research and innovation programme (under grant agreement No. 852123, AutoNorms project) and from the Joseph Rowntree Charitable Trust. Tom Watts’ revisions to this report were supported by the funding provided by his Leverhulme Trust Early Career Research Fellowship (ECF-2022-135). We also collaborated with Article 36 in writing the report.

See also:

- Eirini Giorgou, “Preventing and eradicating the deadly legacy of explosive remnants of war”, February 23, 2023

- Faine Greenwood, “Consumer drones in conflict: where do they fit into IHL?”, March 15, 2022

- Frank Sauer, “Autonomy in weapons systems: playing catch up with technology”, September 29, 2021

A very well articulated narrative on an extremely relevant topic. My understanding of loitering munitions is that once launched/deployed, they cannot be recovered. This means they need to find a target within their endurance period (which should have been decided before launch), else a self-destruct mode is activated. However, many operators and/or planners may not use the option of self-destruct, since it points towards poor planning, leading to loss of a valuable asset (AWS). To obviate this situation, operators may resort to incorrect and maybe illegal targeting. Hence, use of loitering munitions needs very careful regulation with respect to manufacture, employment methodology and training.